Last year, the GSMA Inclusive Tech Lab launched a new platform to help financial services providers test their interoperability processes: the Interoperability Test Platform. The platform allows providers to test their own systems across different use cases, but it was not originally designed for use by one of the most important players in interoperability: hub operators. This latest release aims to fill that gap.

Interoperability hub operators face many challenges when onboarding new participants. To maintain the overall health of the network, it is critical to ensure that new participants follow correct processes with respect to the interoperability protocol. If one participant has an incorrect implementation, confidence in the network can be significantly damaged. Careful onboarding can be time-consuming, even when simply establishing network connectivity between the hub and the new participant. Connectivity is especially troublesome when strict security requirements make it difficult to see what is going wrong. Finally, different participants can have subtly different requirements, and it is important for onboarding processes to be flexible enough to handle these cases. These three problems of correctness, connectivity and flexibility are tackled by the latest release of the test platform.

Ensuring Correctness

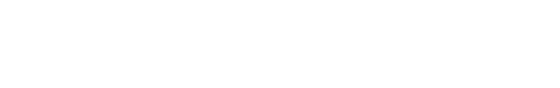

Previous versions of the platform focused on self-testing workflows to help developers who wanted to gain confidence in their own implementation. This release introduces “certification sessions”, which can be used by a hub operator to ensure that participants’ implementations are correct. The test results from a certification session can be explicitly shared with a platform administrator, allowing the hub operator to review and either approve or reject the submission. This workflow frees hub operators from being directly involved in the testing process, by allowing the participants themselves to take responsibility for their test results.

To ensure that the correct procedures are followed by the test participants, the selection of test cases in a certification session needs to be set by the hub operator rather than allowing free choice by the participant. This is achieved through an integrated questionnaire, which uses participants’ answers to automatically select the most appropriate set of test cases. Finally, to guard against fraudulent submissions, the number of execution attempts for each test case can be limited in a certification session. The automated testing process afforded by these new features is already being put into practice as part of the GSMA Mobile Money API Compliance Verification Service.
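To make the idea concrete, here is a minimal sketch of questionnaire-driven test selection with an attempt limit. It is not the platform's actual implementation: the names `CertTestCase`, `select_test_cases` and `AttemptLimiter`, as well as the shape of the questionnaire answers, are assumptions made purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CertTestCase:
    """Hypothetical test case record (not the platform's real schema)."""
    name: str
    # Questionnaire answers that must all match for this test case to apply.
    requires: dict = field(default_factory=dict)


def select_test_cases(catalogue, answers):
    """Return the test cases whose requirements are all met by the answers."""
    return [
        case for case in catalogue
        if all(answers.get(q) == a for q, a in case.requires.items())
    ]


class AttemptLimiter:
    """Refuse further executions once a test case reaches its attempt limit."""

    def __init__(self, max_attempts):
        self.max_attempts = max_attempts
        self._used = {}

    def record_attempt(self, case_name):
        """Count one execution and return the number of attempts remaining."""
        used = self._used.get(case_name, 0)
        if used >= self.max_attempts:
            raise RuntimeError(f"No attempts left for {case_name!r}")
        self._used[case_name] = used + 1
        return self.max_attempts - (used + 1)
```

In this sketch the hub operator owns the catalogue and the limit, while the participant only supplies questionnaire answers, which mirrors the division of responsibility described above.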

Simplifying Connectivity

Because the initial version of the test platform was intended for use in development environments, no security features were included beyond the validation of simple token-based authentication systems, which used standard HTTP headers. However, hub operators need to ensure that security best practices are followed in every environment, so recent releases have introduced new features to validate this.

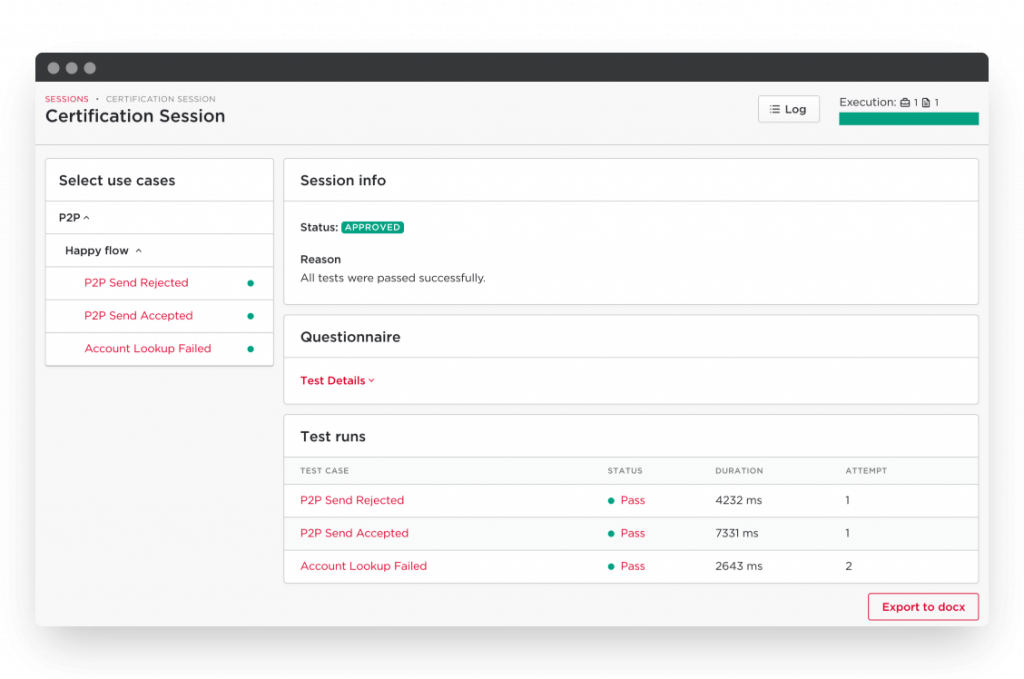

When a new session is created, participants will now be presented with the option to encrypt their connection using mutual TLS. When this option is selected, the test platform will perform client certificate validation as part of the test execution. This setting can be toggled at any time, allowing the encryption to be temporarily disabled to debug a troublesome connection. Furthermore, the platform acts as a proxy between the hub and the participant, allowing the encryption to be controlled separately for each leg so that the connectivity settings within the hub do not need modification at any stage.
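As a rough illustration of what toggling mutual TLS per leg can look like, the sketch below builds a TLS server context with Python's standard `ssl` module. The function name `build_server_context` and its parameters are hypothetical, and the platform's own implementation may differ entirely.

```python
import ssl


def build_server_context(require_client_cert, cert_file=None, key_file=None, ca_file=None):
    """Build a TLS server context for one leg of a proxied connection."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    if cert_file:
        # The server's own certificate and private key.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        # CA bundle used to verify certificates presented by clients.
        context.load_verify_locations(cafile=ca_file)
    # CERT_REQUIRED rejects clients without a valid certificate, which is
    # the "mutual" part of mutual TLS. CERT_NONE disables that check.
    context.verify_mode = ssl.CERT_REQUIRED if require_client_cert else ssl.CERT_NONE
    return context


# Each leg gets its own context, so one can be relaxed for debugging
# while the other keeps client certificate validation switched on.
hub_leg = build_server_context(require_client_cert=True)
participant_leg = build_server_context(require_client_cert=False)
```

Keeping a separate context per leg is what allows the participant side to be debugged without touching the hub side.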

In addition to encrypted connections, we have added validation options for JSON Web Signatures (JWS), which ensure unforgeable messaging, and for ILP packets, which guarantee irrevocable transactions in the Mojaloop FSPIOP API. These validators ensure that the security configuration is correctly set up at the earliest point of onboarding, to reduce the risk of security errors at a later stage.
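For readers unfamiliar with JWS, the sketch below shows the general shape of signature validation on a compact JWS. It is an illustration only: it uses the symmetric HS256 algorithm so that it runs with the standard library alone, whereas production deployments often use asymmetric algorithms, and it says nothing about how the platform's own validator is written. The function names are hypothetical.

```python
import base64
import hashlib
import hmac
import json


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Restore the padding that the compact JWS form strips off.
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_hs256(payload: dict, secret: bytes) -> str:
    """Produce a compact JWS (header.payload.signature) using HS256."""
    header = {"alg": "HS256"}
    signing_input = ".".join(
        _b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes) -> dict:
    """Return the payload if the signature is valid, else raise ValueError."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        signature = _b64url_decode(signature_b64)
    except ValueError as exc:
        raise ValueError("Malformed JWS") from exc
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise ValueError("Unexpected algorithm")
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(signature, expected):
        raise ValueError("Signature mismatch")
    return json.loads(_b64url_decode(payload_b64))
```

A message signed with one key and checked against another is rejected, which is the property that makes a signed message hard to forge.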

Maintaining Flexibility

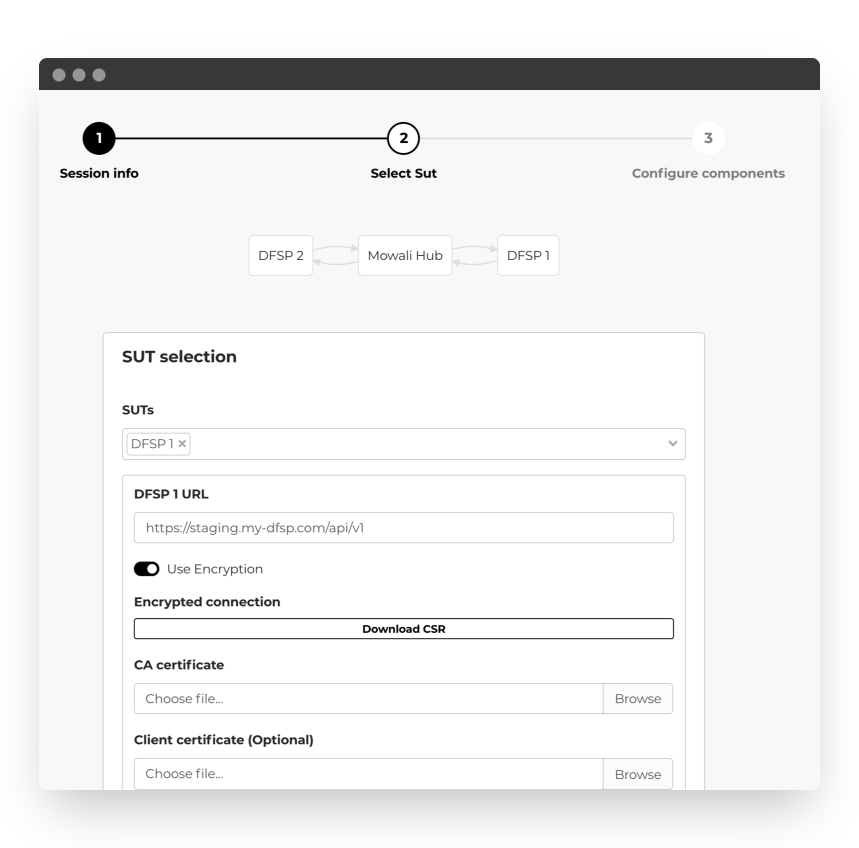

Finally, the latest release of the test platform includes a significant overhaul of the internal simulators. Where simulator behaviour was previously controlled by hand-coded logic external to the platform, it is now possible for platform operators to change this behaviour by adjusting the test cases themselves. This is important to allow hub operators to test unique behaviours on an ad-hoc basis when onboarding a new operator. For example, to simulate the behaviour of an unresponsive remote participant, it is simply a matter of duplicating an existing test case, and then removing the messages which are “dropped” by the remote participant. This feature is enabled by the powerful Twig templating language, which allows dynamic behaviour – such as on-the-fly calculation of transaction fees – to be described within the test case file.
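The sketch below illustrates the two ideas in this paragraph using plain Python data rather than the platform's real test case format, which is not reproduced here. The step layout, participant names, message labels, the `without_replies_from` helper and the 1% fee rate are all assumptions for illustration. On the platform itself, dynamic values such as fees are expressed in Twig inside the test case file; the Python function here merely stands in for that.

```python
import copy
from decimal import Decimal

# Hypothetical test case: an ordered list of messages between participants.
base_case = {
    "name": "P2P transfer",
    "steps": [
        {"from": "payer", "to": "hub", "message": "POST /quotes"},
        {"from": "hub", "to": "payee", "message": "POST /quotes"},
        {"from": "payee", "to": "hub", "message": "PUT /quotes/{id}"},
        {"from": "hub", "to": "payer", "message": "PUT /quotes/{id}"},
    ],
}


def without_replies_from(test_case, participant):
    """Duplicate a test case, cutting it off where `participant` goes silent.

    Assumption: once the participant drops a message, nothing that follows
    in the flow would be sent either, so later steps are removed as well.
    """
    variant = copy.deepcopy(test_case)
    variant["name"] = f"{test_case['name']} ({participant} unresponsive)"
    kept = []
    for step in variant["steps"]:
        if step["from"] == participant:
            break
        kept.append(step)
    variant["steps"] = kept
    return variant


def quote_fee(amount: Decimal, rate: Decimal = Decimal("0.01")) -> Decimal:
    """Stand-in for an on-the-fly fee calculation (the rate is made up)."""
    return (amount * rate).quantize(Decimal("0.01"))


unresponsive_payee = without_replies_from(base_case, "payee")
```

Because the variant is a deep copy, the original test case stays intact and both can be run side by side.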

To make this ability available to a wider range of users, we have also introduced a visual editor for test case authors. Instead of manually editing a YAML source file, it is now possible to create and edit test cases directly within the platform through a visual interface. The platform also now maintains a full version history for test cases, allowing test case creators to introduce new versions without interfering with any test sessions already in progress.

Live Demonstration

To show how the Interoperability Test Platform addresses the challenges of onboarding, we have recorded a video in which a fictional hub operator – ColorHub – onboards a new participant to the network.

The GSMA Interoperability Test Platform is still open for free registration: sign up online or run the code yourself to see how useful the platform can be!